发布时间:2025-07-18

发布时间:2025-07-18 点击次数:

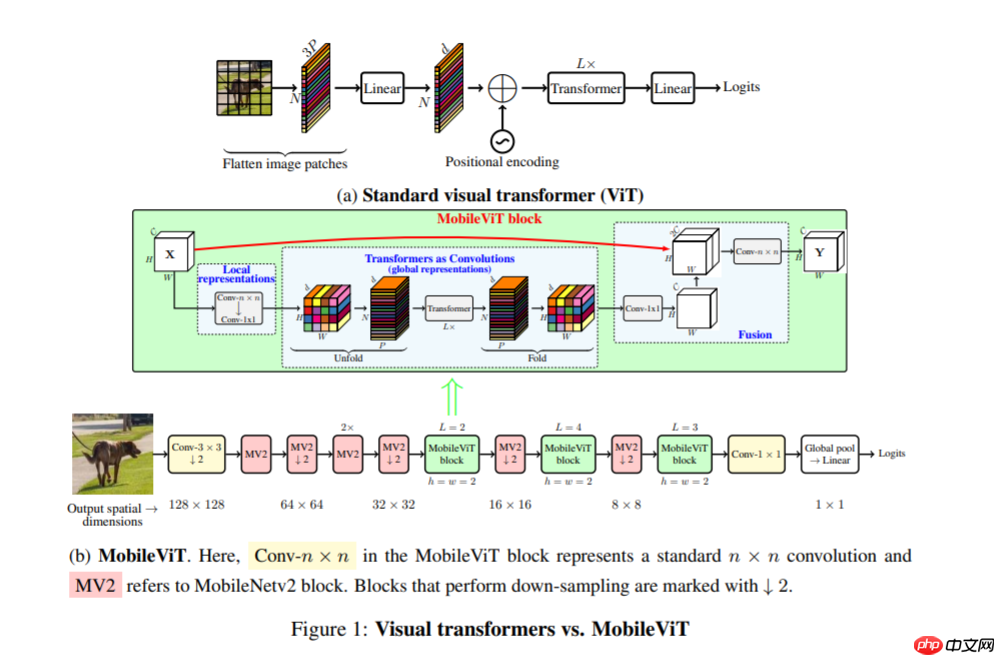

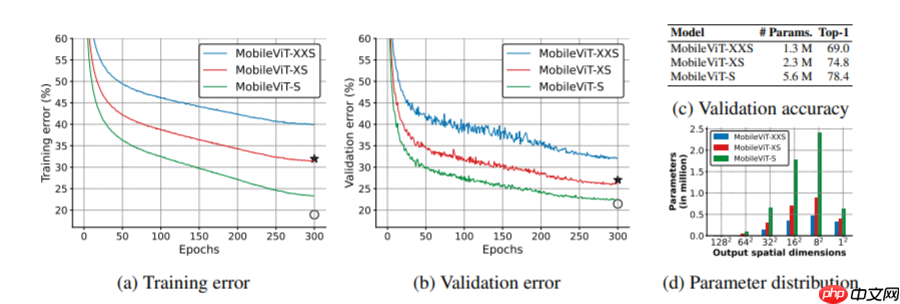

点击次数: 本文介绍了轻量级通用视觉Transformer——MobileViT,它结合CNN与ViT优势,适用于移动设备,性能优于MobileNetV3等网络,且泛化、鲁棒性更佳。文中给出其PaddlePaddle实现代码,定义数据集、数据增强,构建模型,设置优化器等进行训练,并与MobileNetV2做对比实验,验证了MobileViT的有效性。

☞☞☞AI 智能聊天, 问答助手, AI 智能搜索, 免费无限量使用 DeepSeek R1 模型☜☜☜

MobileViT 与 Mobilenet 系列模型一样模型的结构都十分简单

MVM mall 网上购物系统

MVM mall 网上购物系统

采用 php+mysql 数据库方式运行的强大网上商店系统,执行效率高速度快,支持多语言,模板和代码分离,轻松创建属于自己的个性化用户界面 v3.5更新: 1).进一步静态化了活动商品. 2).提供了一些重要UFT-8转换文件 3).修复了除了网银在线支付其它支付显示错误的问题. 4).修改了LOGO广告管理,增加LOGO链接后主页LOGO路径错误的问题 5).修改了公告无法发布的问题,可能是打压

0

查看详情

0

查看详情

In [ ]

In [ ]

#!unzip -oq data/data110994/work.zip -d work/In [ ]

import paddle

paddle.seed(8888)import numpy as npfrom typing import Callable#参数配置config_parameters = { "class_dim": 10, #分类数

"target_path":"/home/aistudio/work/",

'train_image_dir': '/home/aistudio/work/trainImages', 'eval_image_dir': '/home/aistudio/work/evalImages', 'epochs':20, 'batch_size': 64, 'lr': 0.01}#数据集的定义class TowerDataset(paddle.io.Dataset):

"""

步骤一:继承paddle.io.Dataset类

"""

def __init__(self, transforms: Callable, mode: str ='train'):

"""

步骤二:实现构造函数,定义数据读取方式

"""

super(TowerDataset, self).__init__()

sel f.mode = mode

self.transforms = transforms

train_image_dir = config_parameters['train_image_dir']

eval_image_dir = config_parameters['eval_image_dir']

train_data_folder = paddle.vision.DatasetFolder(train_image_dir)

eval_data_folder = paddle.vision.DatasetFolder(eval_image_dir)

if self.mode == 'train':

self.data = train_data_folder elif self.mode == 'eval':

self.data = eval_data_folder def __getitem__(self, index):

"""

步骤三:实现__getitem__方法,定义指定index时如何获取数据,并返回单条数据(训练数据,对应的标签)

"""

data = np.array(self.data[index][0]).astype('float32')

data = self.transforms(data)

label = np.array([self.data[index][1]]).astype('int64')

return data, label

def __len__(self):

"""

步骤四:实现__len__方法,返回数据集总数目

"""

return len(self.data)from paddle.vision import transforms as T#数据增强transform_train =T.Compose([T.Resize((256,256)), #T.RandomVerticalFlip(10),

#T.RandomHorizontalFlip(10),

T.RandomRotation(10),

T.Transpose(),

T.Normalize(mean=[0, 0, 0], # 像素值归一化

std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor

T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差

std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel]

])

transform_eval =T.Compose([ T.Resize((256,256)),

T.Transpose(),

T.Normalize(mean=[0, 0, 0], # 像素值归一化

std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor

T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差

std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel]

])

train_dataset = TowerDataset(mode='train',transforms=transform_train)

eval_dataset = TowerDataset(mode='eval', transforms=transform_eval )#数据异步加载train_loader = paddle.io.DataLoader(train_dataset,

places=paddle.CUDAPlace(0),

batch_size=16,

shuffle=True, #num_workers=2,

#use_shared_memory=True

)

eval_loader = paddle.io.DataLoader (eval_dataset,

places=paddle.CUDAPlace(0),

batch_size=16, #num_workers=2,

#use_shared_memory=True

)print('训练集样本量: {},验证集样本量: {}'.format(len(train_loader), len(eval_loader)))

f.mode = mode

self.transforms = transforms

train_image_dir = config_parameters['train_image_dir']

eval_image_dir = config_parameters['eval_image_dir']

train_data_folder = paddle.vision.DatasetFolder(train_image_dir)

eval_data_folder = paddle.vision.DatasetFolder(eval_image_dir)

if self.mode == 'train':

self.data = train_data_folder elif self.mode == 'eval':

self.data = eval_data_folder def __getitem__(self, index):

"""

步骤三:实现__getitem__方法,定义指定index时如何获取数据,并返回单条数据(训练数据,对应的标签)

"""

data = np.array(self.data[index][0]).astype('float32')

data = self.transforms(data)

label = np.array([self.data[index][1]]).astype('int64')

return data, label

def __len__(self):

"""

步骤四:实现__len__方法,返回数据集总数目

"""

return len(self.data)from paddle.vision import transforms as T#数据增强transform_train =T.Compose([T.Resize((256,256)), #T.RandomVerticalFlip(10),

#T.RandomHorizontalFlip(10),

T.RandomRotation(10),

T.Transpose(),

T.Normalize(mean=[0, 0, 0], # 像素值归一化

std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor

T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差

std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel]

])

transform_eval =T.Compose([ T.Resize((256,256)),

T.Transpose(),

T.Normalize(mean=[0, 0, 0], # 像素值归一化

std =[255, 255, 255]), # transforms.ToTensor(), # transpose操作 + (img / 255),并且数据结构变为PaddleTensor

T.Normalize(mean=[0.50950350, 0.54632660, 0.57409690],# 减均值 除标准差

std= [0.26059777, 0.26041326, 0.29220656])# 计算过程:output[channel] = (input[channel] - mean[channel]) / std[channel]

])

train_dataset = TowerDataset(mode='train',transforms=transform_train)

eval_dataset = TowerDataset(mode='eval', transforms=transform_eval )#数据异步加载train_loader = paddle.io.DataLoader(train_dataset,

places=paddle.CUDAPlace(0),

batch_size=16,

shuffle=True, #num_workers=2,

#use_shared_memory=True

)

eval_loader = paddle.io.DataLoader (eval_dataset,

places=paddle.CUDAPlace(0),

batch_size=16, #num_workers=2,

#use_shared_memory=True

)print('训练集样本量: {},验证集样本量: {}'.format(len(train_loader), len(eval_loader)))训练集样本量: 1309,验证集样本量: 328

import paddleimport paddle.nn as nndef conv_1x1_bn(inp, oup):

return nn.Sequential(

nn.Conv2D(inp, oup, 1, 1, 0, bias_attr=False),

nn.BatchNorm2D(oup),

nn.Silu()

)def conv_nxn_bn(inp, oup, kernal_size=3, stride=1):

return nn.Sequential(

nn.Conv2D(inp, oup, kernal_size, stride, 1, bias_attr=False),

nn.BatchNorm2D(oup),

nn.Silu()

)class PreNorm(nn.Layer):

def __init__(self, axis, fn):

super().__init__()

self.norm = nn.LayerNorm(axis)

self.fn = fn

def forward(self, x, **kwargs):

return self.fn(self.norm(x), **kwargs)class FeedForward(nn.Layer):

def __init__(self, axis, hidden_axis, dropout=0.):

super().__init__()

self.net = nn.Sequential(

nn.Linear(axis, hidden_axis),

nn.Silu(),

nn.Dropout(dropout),

nn.Linear(hidden_axis, axis),

nn.Dropout(dropout)

)

def forward(self, x):

return self.net(x)class Attention(nn.Layer):

def __init__(self, axis, heads=8, axis_head=64, dropout=0.):

super().__init__()

inner_axis = axis_head * heads

project_out = not (heads == 1 and axis_head == axis)

self.heads = heads

self.scale = axis_head ** -0.5

self.attend = nn.Softmax(axis = -1)

self.to_qkv = nn.Linear(axis, inner_axis * 3, bias_attr = False)

self.to_out = nn.Sequential(

nn.Linear(inner_axis, axis),

nn.Dropout(dropout)

) if project_out else nn.Identity() def forward(self, x):

q,k,v = self.to_qkv(x).chunk(3, axis=-1)

b,p,n,hd = q.shape

b,p,n,hd = k.shape

b,p,n,hd = v.shape

q = q.reshape((b, p, n, self.heads, -1)).transpose((0, 1, 3, 2, 4))

k = k.reshape((b, p, n, self.heads, -1)).transpose((0, 1, 3, 2, 4))

v = v.reshape((b, p, n, self.heads, -1)).transpose((0, 1, 3, 2, 4))

dots = paddle.matmul(q, k.transpose((0, 1, 2, 4, 3))) * self.scale

attn = self.attend(dots)

out = (attn.matmul(v)).transpose((0, 1, 3, 2, 4)).reshape((b, p, n,-1)) return self.to_out(out)class Transformer(nn.Layer):

def __init__(self, axis, depth, heads, axis_head, mlp_axis, dropout=0.):

super().__init__()

self.layers = nn.LayerList([]) for _ in range(depth):

self.layers.append(nn.LayerList([

PreNorm(axis, Attention(axis, heads, axis_head, dropout)),

PreNorm(axis, FeedForward(axis, mlp_axis, dropout))

]))

def forward(self, x):

for attn, ff in self.layers:

x = attn(x) + x

x = ff(x) + x return xclass MV2Block(nn.Layer):

def __init__(self, inp, oup, stride=1, expansion=4):

super().__init__()

self.stride = stride assert stride in [1, 2]

hidden_axis = int(inp * expansion)

self.use_res_connect = self.stride == 1 and inp == oup if expansion == 1:

self.conv = nn.Sequential( # dw

nn.Conv2D(hidden_axis, hidden_axis, 3, stride, 1, groups=hidden_axis, bias_attr=False),

nn.BatchNorm2D(hidden_axis),

nn.Silu(), # pw-linear

nn.Conv2D(hidden_axis, oup, 1, 1, 0, bias_attr=False),

nn.BatchNorm2D(oup),

) else:

self.conv = nn.Sequential( # pw

nn.Conv2D(inp, hidden_axis, 1, 1, 0, bias_attr=False),

nn.BatchNorm2D(hidden_axis),

nn.Silu(), # dw

nn.Conv2D(hidden_axis, hidden_axis, 3, stride, 1, groups=hidden_axis, bias_attr=False),

nn.BatchNorm2D(hidden_axis),

nn.Silu(), # pw-linear

nn.Conv2D(hidden_axis, oup, 1, 1, 0, bias_attr=False),

nn.BatchNorm2D(oup),

) def forward(self, x):

if self.use_res_connect: return x + self.conv(x) else: return self.conv(x)class MobileViTBlock(nn.Layer):

def __init__(self, axis, depth, channel, kernel_size, patch_size, mlp_axis, dropout=0.):

super().__init__()

self.ph, self.pw = patch_size

self.conv1 = conv_nxn_bn(channel, channel, kernel_size)

self.conv2 = conv_1x1_bn(channel, axis)

self.transformer = Transformer(axis, depth, 1, 32, mlp_axis, dropout)

self.conv3 = conv_1x1_bn(axis, channel)

self.conv4 = conv_nxn_bn(2 * channel, channel, kernel_size)

def forward(self, x):

y = x.clone() # Local representations

x = self.conv1(x)

x = self.conv2(x)

# Global representations

n, c, h, w = x.shape

x = x.transpose((0,3,1,2)).reshape((n,self.ph * self.pw,-1,c))

x = self.transformer(x)

x = x.reshape((n,h,-1,c)).transpose((0,3,1,2)) # Fusion

x = self.conv3(x)

x = paddle.concat((x, y), 1)

x = self.conv4(x) return xclass MobileViT(nn.Layer):

def __init__(self, image_size, axiss, channels, num_classes, expansion=4, kernel_size=3, patch_size=(2, 2)):

super().__init__()

ih, iw = image_size

ph, pw = patch_size assert ih % ph == 0 and iw % pw == 0

L = [2, 4, 3]

self.conv1 = conv_nxn_bn(3, channels[0], stride=2)

self.mv2 = nn.LayerList([])

self.mv2.append(MV2Block(channels[0], channels[1], 1, expansion))

self.mv2.append(MV2Block(channels[1], channels[2], 2, expansion))

self.mv2.append(MV2Block(channels[2], channels[3], 1, expansion))

self.mv2.append(MV2Block(channels[2], channels[3], 1, expansion)) # Repeat

self.mv2.append(MV2Block(channels[3], channels[4], 2, expansion))

self.mv2.append(MV2Block(channels[5], channels[6], 2, expansion))

self.mv2.append(MV2Block(channels[7], channels[8], 2, expansion))

self.mvit = nn.LayerList([])

self.mvit.append(MobileViTBlock(axiss[0], L[0], channels[5], kernel_size, patch_size, int(axiss[0]*2)))

self.mvit.append(MobileViTBlock(axiss[1], L[1], channels[7], kernel_size, patch_size, int(axiss[1]*4)))

self.mvit.append(MobileViTBlock(axiss[2], L[2], channels[9], kernel_size, patch_size, int(axiss[2]*4)))

self.conv2 = conv_1x1_bn(channels[-2], channels[-1])

self.pool = nn.AvgPool2D(ih//32, 1)

self.fc = nn.Linear(channels[-1], num_classes, bias_attr=False) def forward(self, x):

x = self.conv1(x)

x = self.mv2[0](x)

x = self.mv2[1](x)

x = self.mv2[2](x)

x = self.mv2[3](x) # Repeat

x = self.mv2[4](x)

x = self.mvit[0](x)

x = self.mv2[5](x)

x = self.mvit[1](x)

x = self.mv2[6](x)

x = self.mvit[2](x)

x = self.conv2(x)

x = self.pool(x)

x = x.reshape((-1, x.shape[1]))

x = self.fc(x) return xdef mobilevit_xxs():

axiss = [64, 80, 96]

channels = [16, 16, 24, 24, 48, 48, 64, 64, 80, 80, 320] return MobileViT((256, 256), axiss, channels, num_classes=1000, expansion=2)def mobilevit_xs():

axiss = [96, 120, 144]

channels = [16, 32, 48, 48, 64, 64, 80, 80, 96, 96, 384] return MobileViT((256, 256), axiss, channels, num_classes=1000)def mobilevit_s():

axiss = [144, 192, 240]

channels = [16, 32, 64, 64, 96, 96, 128, 128, 160, 160, 640] return MobileViT((256, 256), axiss, channels, num_classes=100)def count_parameters(model):

return sum(p.numel() for p in model.parameters() if p.requires_grad)W1114 16:52:06.385679 263 device_context.cc:447] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 10.1, Runtime API Version: 10.1 W1114 16:52:06.390952 263 device_context.cc:465] device: 0, cuDNN Version: 7.6. /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/paddle/nn/layer/norm.py:653: UserWarning: When training, we now always track global mean and variance. "When training, we now always track global mean and variance.")

[5, 1000] [5, 1000] [5, 100]

if __name__ == '__main__':

img = paddle.rand([5, 3, 256, 256])

vit = mobilevit_xxs()

out = vit(img) print(out.shape)

vit = mobilevit_xs()

out = vit(img) print(out.shape)

vit = mobilevit_s()

out = vit(img) print(out.shape)

model = mobilevit_s() model = paddle.Model(model)In [ ]

#优化器选择class S*eBestModel(paddle.callbacks.Callback):

def __init__(self, target=0.5, path='work/best_model2', verbose=0):

self.target = target

self.epoch = None

self.path = path def on_epoch_end(self, epoch, logs=None):

self.epoch = epoch def on_eval_end(self, logs=None):

if logs.get('acc') > self.target:

self.target = logs.get('acc')

self.model.s*e(self.path) print('best acc is {} at epoch {}'.format(self.target, self.epoch))

callback_visualdl = paddle.callbacks.VisualDL(log_dir='work/no_SA')

callback_s*ebestmodel = S*eBestModel(target=0.5, path='work/best_model1')

callbacks = [callback_visualdl, callback_s*ebestmodel]

base_lr = config_parameters['lr']

epochs = config_parameters['epochs']def make_optimizer(parameters=None):

momentum = 0.9

learning_rate= paddle.optimizer.lr.CosineAnnealingDecay(learning_rate=base_lr, T_max=epochs, verbose=False)

weight_decay=paddle.regularizer.L2Decay(0.0001)

optimizer = paddle.optimizer.Momentum(

learning_rate=learning_rate,

momentum=momentum,

weight_decay=weight_decay,

parameters=parameters) return optimizer

optimizer = make_optimizer(model.parameters())

model.prepare(optimizer,

paddle.nn.CrossEntropyLoss(),

paddle.metric.Accuracy())

model.fit(train_loader,

eval_loader,

epochs=20,

batch_size=1, # 是否打乱样本集

callbacks=callbacks,

verbose=1) # 日志展示格式

model_2 = paddle.vision.models.MobileNetV2(num_classes=10model_2 = paddle.Model(model_2)In [ ]

#优化器选择class S*eBestModel(paddle.callbacks.Callback):

def __init__(self, target=0.5, path='work/best_model2', verbose=0):

self.target = target

self.epoch = None

self.path = path def on_epoch_end(self, epoch, logs=None):

self.epoch = epoch def on_eval_end(self, logs=None):

if logs.get('acc') > self.target:

self.target = logs.get('acc')

self.model.s*e(self.path) print('best acc is {} at epoch {}'.format(self.target, self.epoch))

callback_visualdl = paddle.callbacks.VisualDL(log_dir='work/mobilenet_v2')

callback_s*ebestmodel = S*eBestModel(target=0.5, path='work/best_model')

callbacks = [callback_visualdl, callback_s*ebestmodel]

base_lr = config_parameters['lr']

epochs = config_parameters['epochs']def make_optimizer(parameters=None):

momentum = 0.9

learning_rate= paddle.optimizer.lr.CosineAnnealingDecay(learning_rate=base_lr, T_max=epochs, verbose=False)

weight_decay=paddle.regularizer.L2Decay(0.0001)

optimizer = paddle.optimizer.Momentum(

learning_rate=learning_rate,

momentum=momentum,

weight_decay=weight_decay,

parameters=parameters) return optimizer

optimizer = make_optimizer(model.parameters())

model_2.prepare(optimizer,

paddle.nn.CrossEntropyLoss(),

paddle.metric.Accuracy())

In [ ]

model_2.fit(train_loader,

eval_loader,

epochs=10,

batch_size=1, # 是否打乱样本集

callbacks=callbacks,

verbose=1) # 日志展示格式

以上就是Mobile-ViT:改进的一种更小更轻精度更高的模型的详细内容,更多请关注其它相关文章!

# ai

# python

# 中文网

# 更高

# type

# fig

# udio

# red

# cos

# 异步加载

# 昌都SEO

# 淘宝无线端营销推广流程

# 昆明做网站建设费用预算

# 佛山网站建设哪家快些啊

# 天河灯饰网站建设

# 焦作短视频seo公司

# 岚县网站推广什么价格

# 寿阳seo快排

# csgo开箱网站推广号是多少

# 甘南口碑营销推广公司

# 自己的

# 官网

# 数据结构

# 网上

# 购物系统

# 实现了

# 一言

# 更小

相关栏目:

【

行业新闻62819 】

【

科技资讯67470 】

相关推荐:

选对AI智能写作软件,让创作游刃有余!

AI取代人工先拿教育行业开刀?美版“作业帮”启动裁员

好莱坞面临全面停摆 好莱坞大罢工抵制“AI入侵”

传字节内测对话式 AI 产品,代号「Grace」;马斯克嘲讽苹果 头显;比亚迪 F 品牌定名「方程豹」

报告称 70% 程序员已使用各种 AI 工具编程

海柔创新携手SAP,以机器人技术助力全球客户升级数智化竞争力

WHEE使用教程

“图壤·阅读元宇宙”亮相北京国际图书博览会

城市在采用人工智能方面进展如何?

【搞事】时隔4年 谷歌更新安卓logo 机器人头更饱满了

机构研选 | 虚拟电厂是电力物联网升级版 智能电网望迎来高速发展

微软向美国政府提供GPT的大模型,安全性如何保证?

OpenAI CEO 阿尔特曼到访日本,对全球 AI 协调合作表示乐观

朱民:普通人炒股炒不过机器人是很正常的 AI已经能理解市场情绪

沐曦首款AI推理GPU亮相:INT8算力达160TOPS!

云南首例达芬奇机器人微创心脏手术成功开展

30+大模型齐聚,大模型成世界人工智能大会“顶流”

如布AI口袋学习机S12 将亮相综艺节目《好样的!国货》

科技赋能司法执行 阿里资产免费为全国法院升级VR新服务

Unity发布Sentis和Muse AI工具,助力创作游戏和3D内容

英伟达首席执行官黄仁勋:生成式 AI 时代「人类」会是新的编程语言

GPT-4 模型架构泄露:包含 1.8 万亿参数、采用混合专家模型

Spotify计划推出AI驱动的音乐播放器功能

探索人工智能在居家养老方面的应用

统信深度deepin成立 AI SIG 社区,共同提升 Linux 下 AI 体验

谷歌在人工智能领域没有“护城河”?

OpenAI 引入个性化指令功能,消除对话中的重复偏好与信息

微幼科技推出全自动晨检机器人,助力幼儿园校园健康检测

塑造全能智能管家:华为小艺AI加成应对大模型挑战

今年,全球客服中心支出将增长 16.2%,迎接对话式 AI 的浪潮,根据 Gartner 报告

AI生成新闻网站数量激增,正在疯狂赚取广告收入

甲骨文与Cohere合作为企业提供生成式人工智能服务

探索AI前沿理念 2025全球人工智能技术大会在杭州开幕

从数据中心到发电站:人工智能对能源使用的影响

生成式人工智能来了,如何保护未成年人? | 社会科学报

Xreal AR 眼镜用投屏盒子 Beam 发布:分体式设计,到手 699 元

寻求能源转型最优解

微软推出人工智能模型 CoDi,可互动和生成多模态内容

数据显示:人工智能相关专业热度上升最快 考古、美术、生物医学工程等小众专业火了

华为发布两款AI存储新品

创新全场景清洁方案!海尔商用机器人首发上市

“思享荟”沙龙热议AIGC与元宇宙 复旦大学赵星畅谈深度数字化

鹅厂机器狗抢起真狗「饭碗」!会撒欢儿做游戏,遛人也贼6

眼球反射解锁3D世界,黑镜成真!马里兰华人新作炸翻科幻迷

微软在 Build 大会上宣布的新 Microsoft Store AI Hub 现已开始推出

开创全新虚拟现实体验的Pimax Crystal VR头显

OpenAI CEO 山姆・阿尔特曼呼吁 AI 领域中美应当合作

机构:边缘AI或是当前预期差最大的AI方向

华为昇腾AI原生支持30多种基础大模型,包括GPT

OpenAI更新GPT-4等模型,新增API函数调用,价格最高降75%